“A Novell-Mandriva marriage?”

Just read on L’Informaticien: “John Dragoon, VP and head of worldwide marketing at Novell: Some aspects of the Mandriva company are interesting, that’s undeniable. We have a lot of respect for its technology, but that is not what could interest us”. Read the article. Good thing the Americans are around to do business with Linux.

Linux, Windows or both? Doesn’t matter to virtual desktop vendor Ulteo

Ulteo is poised to offer commercial support for its free virtual desktop infrastructure software, which the open-source start-up says will cost companies a fraction of established offerings from Citrix, Microsoft and VMware. Read the Computer World article.

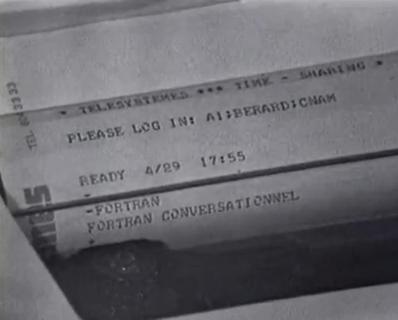

It hasn’t changed that much since… 1970!

Running Ulteo Open Virtual Desktop in the clouds with Amazon’s EC2

Thanks to experiments by Prabhu Ramamoorthy and the nice work of Phil Lavigna, everyone can now use Ulteo OVD in the clouds with Amazon EC2. Just watch the two video tutorials at http://www.ulteoadmin.com/! Note: the EC2 Ulteo OVD image will run only on EC2 USA. If you plan to use it on EC2 EU, you have to register the image in EC2 EU. In that case, please let us know so I can post the news.

Electronic autodafé or Why books should remain printed on paper

Tempted by Amazon’s Kindle? Maybe you should have a look at this story: “Amazon Erases Orwell Books From Kindle”. There is a word for that, at least in French: it’s an “autodafé”. It recalls dark parts of our recent history. However, burning books is really less convenient and takes much more time than erasing electronic books. So I guess I’ll stick with plain paper books.

Google Chrome OS

So… what I told a good friend of mine yesterday evening has just been announced: Google is to release its “own” operating system, called Chrome OS. In short: Linux kernel, simple interface, all apps on the web, better security, very fast boot. This is likely to be much like gOS, actually. (I’m sad for them; it seems they missed out on a Google buyout they were obviously expecting.) So in the future it’s very likely that Microsoft Windows and Mac OS are going to be challenged the hard way in the mainstream market. But I was wrong yesterday, because I predicted to my friend that the Google OS would be nothing other than Google Android. No: instead they have chosen a luxurious way to get the best from Google’s own development teams by entering into a kind of self-competition, or maybe better to call it internal competition. Now, here are a few things that come to mind:

- Linux kernel, fast boot: OK, there are a few people who already know how to do that, and have actually been doing it for years

- (Web) applications on the web (say “in the clouds”, it’s more fashionable): OK… what else? Windows applications? No. Linux applications? Maybe, since it’s a Linux kernel. Hmmm.

- Minimal user interface: ouh ouh! IceWM?

- “redesigning the underlying security architecture of the OS so that users don’t have to deal with viruses, malware and security updates”: I think there are a couple of guys around here on the planet who have been using such operating systems daily, for at least… 15 years (ouch… grey hair is not far off).

- What else…? … Hardware support! Plug in your too-cool €15 XYZ device and see what happens. I’m curious to see what they do about this part, really curious.

So, OK, I’m a bit harsh, rather amused actually, about this announcement, because you know, to me it’s just a minimal Linux system with a browser on top of it, and I understand that this announcement is important and is catching a lot of attention because there is a company with 6 letters starting with a G behind it. And it’s really possible that they will capture a significant part of the OS market, for sure. Anyway, I’m not too convinced about the lack of support for Windows apps. I think they may be a little too confident about the capabilities of web-based applications, but this may change in the next three to five years. And of course I can’t wait to see what they do about support for the zillions of hardware devices that come with a Windows driver on a CD to make them work. Stay tuned!

Open Virtual Desktop v1.0 out w/support for both Win and Linux apps!

Look at the Ulteo homepage

IP Traffic Shaping on Linux

Recently I got interested in traffic shaping to simulate various bandwidth capacities. It was a headache to find working software in that field until I realized that 1) it was easy and 2) it has been straightforward with the Linux kernel since version (2.2.x?).

First of all, you need to modprobe a few kernel modules: cls_u32, sch_cbq, ip_tables

Then all you have to do is to use the “tc” utility which is part of the iproute package.

For instance, let’s assume that you want to limit incoming and outgoing traffic to 256kbits/s on your local host, and assuming that you have a 100Mbps capable network interface on eth0, what you have to do is:

# tc qdisc add dev eth0 root handle 1: cbq avpkt 1000 bandwidth 100mbit

# tc class add dev eth0 parent 1: classid 1:1 cbq rate 256kbit allot 1500 prio 5 bounded isolated

# tc filter add dev eth0 parent 1: protocol ip prio 16 u32 match ip dst 0/0 flowid 1:1

Then if you want to change the limit, use “replace” instead of “add” in the second command. For instance:

# tc class replace dev eth0 parent 1: classid 1:1 cbq rate 64kbit allot 1500 prio 5 bounded isolated

You will easily notice that it does the job very well.
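The commands above can be wrapped in a small helper so that the interface and rate are not retyped each time. This is only a sketch: the function name `shape_cmds` and its arguments are made up for illustration, the 100mbit link bandwidth is simply carried over from the example, and the function only prints the tc commands rather than running them.

```shell
#!/bin/sh
# Dry-run helper: prints the tc commands from this post for a given
# interface and rate instead of executing them.
# shape_cmds and its parameters are illustrative names, not part of iproute.

shape_cmds() {
    dev=$1             # network interface, e.g. eth0
    rate=$2            # shaped rate, e.g. 256kbit
    action=${3:-add}   # "add" for the first setup, "replace" to change the limit

    if [ "$action" = "add" ]; then
        # The root CBQ qdisc is only created on the first setup.
        echo "tc qdisc add dev $dev root handle 1: cbq avpkt 1000 bandwidth 100mbit"
    fi

    # The class carries the actual rate limit.
    echo "tc class $action dev $dev parent 1: classid 1:1 cbq rate $rate allot 1500 prio 5 bounded isolated"

    if [ "$action" = "add" ]; then
        # Catch-all filter sending all IP traffic to class 1:1.
        echo "tc filter add dev $dev parent 1: protocol ip prio 16 u32 match ip dst 0/0 flowid 1:1"
    fi
}

shape_cmds eth0 256kbit          # prints the three setup commands
shape_cmds eth0 64kbit replace   # prints only the "class replace" command
```

To actually apply the printed commands, the output could be piped to a root shell, for instance `shape_cmds eth0 256kbit | sh`.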

Anyway, I ran into some trouble when I started to monitor the traffic: the bandwidth that I set with tc doesn’t match the actual limit at all. For instance, when setting 50kbit in tc, I get a real limit of around 24 *kBytes* per second, which is about 200kbps. At first, I thought it was a problem with “knetdockapp”, which I’m using to monitor the traffic. So I used Bandwidthd, which showed similar results, and finally I transferred a big file for 60 seconds and calculated the real rate from the number of bytes that were received. The results were still the same.
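For reference, the bytes-to-bits arithmetic behind that last measurement can be sketched as follows. The helper name `rate_kbit` is hypothetical, and the sketch assumes 1 kByte = 1000 bytes and 1 kbit = 1000 bits; the byte count is simply 24 kBytes/s multiplied by 60 seconds, not a logged value.

```shell
#!/bin/sh
# rate_kbit BYTES SECONDS
# Prints the average rate in kbit/s (integer arithmetic, 1 kbit = 1000 bits).
# rate_kbit is an illustrative name, not an existing tool.
rate_kbit() {
    echo $(( $1 * 8 / $2 / 1000 ))
}

# 24 kBytes/s sustained for 60 seconds is 1440000 bytes.
measured=$(rate_kbit 1440000 60)
configured=50   # the rate given to tc, in kbit/s

echo "measured: ${measured} kbit/s, configured: ${configured} kbit/s"
```

With these figures the measured rate works out to 192 kbit/s, which is the “about 200kbps” mentioned above, against the 50 kbit/s configured in tc.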

So I’m still wondering why there is such a difference between the figure provided to tc and the real shaped bandwidth.

Ulteo unveils the first Open Source virtual desktop, providing businesses with quicker, cheaper deployment and easier applications management

Following its commitment to desktop virtualization solutions, Ulteo, an Open Virtual Desktop Infrastructure company, announced today that they were releasing the first version of their Open Virtual Desktop solution for enterprises. Delivering faster deployment times and ease of management for the IT department, this first release can be integrated easily into an existing professional Linux or Windows IT environment. The solution can be up and running in a few minutes, delivering rich desktop applications to corporate users.